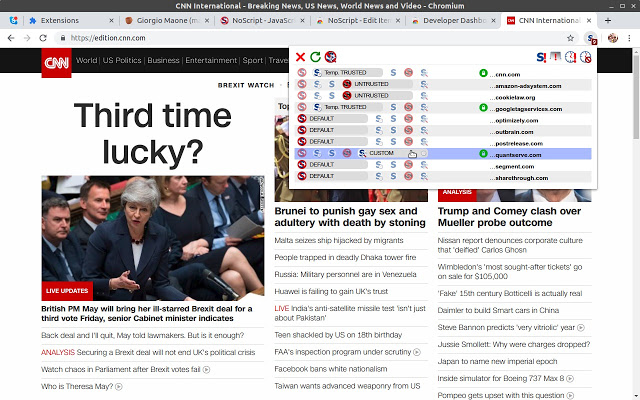

I've been tracking this issue since #1252 and #6204, so I'll chime in with our current use-case and some thoughts (long post, but some background is necessary I think).

We use this mainly for A/B-testing, in cases where we want to determine the bucket on the client and render a component only after that. Often we still want to provide a default variant (in a noscript-tag) for people without JS, or as graceful degradation in cases where JS has crashed.

Providing a noscript-fallback for conditional functionality that is determined on the client might be a bit niche, but it shouldn't be that uncommon either. It could be as easy as … but to make this work fully we have had to:

- Render the default component with renderToStaticMarkup (including using a couple of Providers to make Redux and react-router work).
- Remove all nested noscript-tags in the resulting string (quite common for us since we also use noscript-fallbacks for lazy-loaded images).
- Instead of renderToStaticMarkup, read the existing textContent/innerHTML of the noscript-tag.
- Use dangerouslySetInnerHTML with the above.
- (If JS crashes before the bucket has been set: catch the error, replace all relevant noscript-tags with divs, and set their innerHTML to the innerHTML of the noscript-tag.)

The proposed solution to ignore the content of noscript-tags on the client is perfect (as long as we still keep the existing content in them). While it's really, really awesome that React 16 now handles noscript, if we want a really great story around noscript, I'd say automatically removing nested noscript-tags as per the suggestion in Nested `<noscript>` renders invalid HTML #6204 is key. Would this be doable in the new codebase? Either way it should be a separate issue. In client/ReactDOM.

I'm not sure where your actual concern lies with the information of whether or not your browser has JavaScript enabled. You're correct that certain browsers are doing more than some would like them to.

As far as the scenario stated in your question is concerned, it's the normal operation of a browser to request resources that are part of a page. The article you linked in one of your comments is, in my opinion, about a different topic than your question though. A browser is unaware of the actual data that is being loaded from a URL just by looking at it, and as such would not be able to detect a one-by-one tracking pixel before actually loading it. This is also nothing your browser does "behind your back", and you're probably more concerned about the third party that delivered that graphic than about your browser.

The solutions I can see in this matter (right now) are the following:

- Block all requests from foreign domains. You already seem to be aware of a tool-set that could do that. You could e.g. use RequestPolicy and set the scope accordingly. This won't protect you from tracking pixels that are delivered from the same domain you're visiting though.
- Use something like a GreaseMonkey script or similar to modify the DOM and remove the noscript part.

I don't know of a way to turn off evaluation of noscript, but in the above example, you could write a short browser extension to cancel any request with a URL containing the substring `/tr?`. See chrome.webRequest for a description of the Chrome browser's API for watching, modifying, or blocking requests in flight.

`console.log("Website Blocker is running!")`

`"description": "Keeps the browser from fetching tracking URLs",`

Or delete it and let the browser supply a dummy one. Its only role is to highlight the extension's entry on the chrome://extensions page.

The extension worked fine on the page in question. The page's console log has an error message `net::ERR_BLOCKED_BY_CLIENT` for the image fetch. Likewise, there is a red failure line in the Network tab.

Also, the URL pre-filtering is working well. The JavaScript is only getting called for the specific tracking URL. Checking the extension's console, I see no "Passing.
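The browser-extension idea mentioned in this thread (cancel any request whose URL contains the substring `/tr?`) could be sketched roughly as follows. This is not the original extension's code, only a minimal illustration. It assumes a Manifest V2-style background script with the `webRequest` and `webRequestBlocking` permissions; the names `isTrackingUrl` and `cancelIfTracking` are hypothetical.

```javascript
// background.js: a sketch, assuming a Manifest V2-style extension that has
// the "webRequest" and "webRequestBlocking" permissions in its manifest.

// Hypothetical helper: true for any URL containing the substring "/tr?".
function isTrackingUrl(url) {
  return url.includes("/tr?");
}

// Listener for chrome.webRequest.onBeforeRequest.
// Returning { cancel: true } tells the browser to drop the request.
function cancelIfTracking(details) {
  return { cancel: isTrackingUrl(details.url) };
}

// Register the listener only when the extension API is actually present,
// so the file can also be loaded outside a browser.
if (typeof chrome !== "undefined" && chrome.webRequest) {
  chrome.webRequest.onBeforeRequest.addListener(
    cancelIfTracking,
    { urls: ["<all_urls>"] }, // assumption: inspect every URL in JavaScript
    ["blocking"]              // needed so the return value can cancel
  );
}
```

The `urls` filter here is deliberately broad. A narrower match pattern would let the browser pre-filter requests so the listener only runs for candidate URLs.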
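The "remove all nested noscript-tags in the resulting string" step from the React comment might look something like the sketch below. It assumes the string comes from a static render (for example `renderToStaticMarkup`) and that the inner fallback content should be kept while only the inner tags are dropped. Both function names are hypothetical, and a regular expression is only a rough tool for HTML, so treat this as an illustration rather than the commenter's actual code.

```javascript
// Hypothetical helper: strip every <noscript ...> and </noscript> tag from a
// rendered HTML string while keeping whatever was between them.
function unwrapNoscript(html) {
  return html.replace(/<\/?noscript\b[^>]*>/gi, "");
}

// Hypothetical helper: wrap rendered markup in a single outer noscript-tag,
// after removing any noscript-tags that would otherwise end up nested.
function toNoscriptFallback(renderedMarkup) {
  return "<noscript>" + unwrapNoscript(renderedMarkup) + "</noscript>";
}
```

For instance, markup containing a lazy-loaded image fallback such as `<div><noscript><img src="a.png"></noscript></div>` would end up with exactly one noscript-tag around the whole fragment.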
